PhDays - GDALR: An Efficient Model Duplication Attack On Black Box Machine Learning Models

Nikhil Joshi, Rewanth Tammana

Abstract

Trained machine learning models are core components of proprietary products. Such products are either delivered as a software package containing the trained model or deployed in the cloud with restricted API access. In this machine-learning-as-a-service (MLaaS) model, users are charged per query or per hour, which generates revenue for the model owners. Models deployed in the cloud can be vulnerable to model duplication attacks: by repeatedly querying a service's API, an attacker can clone the functionality of the black-box model hidden behind it. In the worst case, the attacker can sell the cloned model or use it in their own business.

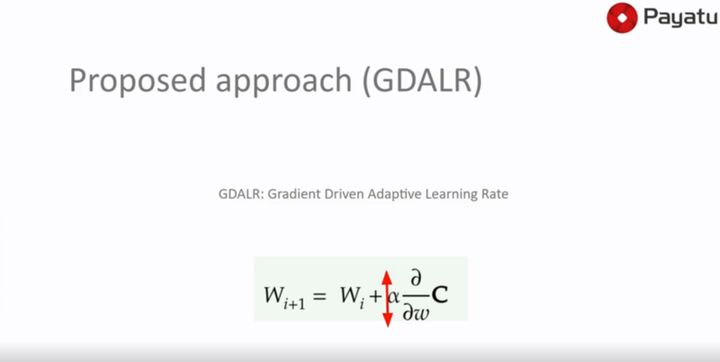

The speakers will present a modification to the traditional approach that attackers commonly use to train their cloned models. Their research shows a serious need to rewrite the current countermeasures for MLaaS, an essential and interesting area for future work.

Date

May 21, 2019 12:00 AM

Senior Security Architect

Rewanth Tammana is a security ninja, open-source contributor, and independent consultant. He was previously a Senior Security Architect at Emirates NBD (National Bank of Dubai). He is passionate about DevSecOps, cloud security, and container security. He has added more than 17,000 lines of code to Nmap (known as the Swiss Army knife of network utilities) and holds industry certifications such as CKS (Certified Kubernetes Security Specialist) and CKA (Certified Kubernetes Administrator). Rewanth speaks and delivers training at international security conferences including Black Hat, DEF CON, Hack In The Box (Dubai and Amsterdam), CRESTCon UK, PHDays, Nullcon, BSides, CISO Platform, null chapters, and many others. Bugcrowd recognized him as one of its MVP researchers in 2018, and he has identified vulnerabilities in several organizations. He has also published an IEEE research paper on an offensive attack in machine learning and security, and he took part in the renowned Google Summer of Code program.